State by State Data Breach Map

Which data breach regulations are in force in your state?

Check out the Snell & Wilmer interactive, state by state Data Breach Map.

Here we are, four days beyond May 25th – the date when enforcement of the General Data Protection Regulation was to begin. So far, no planes have fallen from the sky (remember the dire Y2K warnings?) and no specific enforcement actions by the EU have been announced. Privacy activist Max Schrems’ organization noyb.eu immediately filed $8.8 billion in lawsuits against Facebook and Google. But what of the EU regulators? What are their plans?

Only time will tell. I get the feeling that what will happen with GDPR enforcement is kind of like the Super Bowl. There has been incessant conversation and speculation leading up to May 25th, and now the game has begun. It will be played out on the field over the next months and years. Then we will really know what will happen.

A Dark Reading article, “GDPR Oddsmakers: Who, Where, When Will Enforcement Hit First?”, includes some interesting speculation and advice from privacy experts. I particularly like a comment in the article by Dave Lewis, global security advocate at Akamai Technologies:

There has been an inordinate amount of focus on the potential fines. The reality is that GDPR is very much a push towards ensuring the accountability of the data for which [companies] are stewards.

If that accountability really improves, we should cheer GDPR, not live in fear of its dire consequences.

My two cents …

Stewardship: “the management or care of something, particularly the kind that works.” (Vocabulary.com)

I think my favorite new term in the business vernacular is “Data Stewardship.” I like how vocabulary.com emphasizes that good stewardship leads to things that work.

Extending the concept of stewardship to management of data, a recent article in AnalyticsIndia states:

One of the simplest definitions of data steward comes from the problem statement posed by authors Tom Davenport and Jill Dyché in their 2013 research study, ‘Big Data in Big Companies’:

“Several companies mentioned the need for combining data scientist skills with traditional data management virtues. Solid knowledge of data architectures, metadata, data quality and correction processes, data stewardship and administration, master data management hubs, matching algorithms, and a host of other data-specific topics are important for firms pursuing big data as a long-term strategic differentiator.”

The article defines four major areas of responsibility for a data steward:

The third area in this list strikes particularly close to home. I like the fact that security and privacy are considered to be vital components of data stewardship. I firmly believe they make data work (as vocabulary.com suggests).

This morning, I reviewed a proposal for improving a company’s security against data breach. The main reasons given for the investment in security technology were:

These are all valid reasons for making the proposed investment, but shouldn’t there be more? Doesn’t good security support good business results in a positive way?

By happy coincidence, just before I reviewed the proposal, I read a thought-provoking article, “Reframing Cybersecurity As A Business Enabler,” published by Innovation Enterprise. The introductory paragraph states the obvious:

Innovation is vital to remaining competitive in the digital economy, yet cybersecurity risk is often viewed as an inhibitor to these efforts. With the growing number of security breaches and the magnitude of their consequences, it is easy to see why organizations are apprehensive to implement new technologies into their operations and offerings. The reality is that the threat of a potential attack is a constant.

But rather than dwelling on the problem, this article challenges traditional thinking:

Though the threat is real, instead of viewing cybersecurity in terms of risk, organizations should approach cybersecurity as a business enabler. By building cybersecurity into the foundation of their business strategy, organizations will be able to support business agility, facilitate organizational operations and develop consumer loyalty.

The article explores each of these three business value areas in more detail. I have included a brief excerpt in each area:

Security supports business agility

Instituting strong security measures enables organizations to operate without being compromised or slowed down. Companies that invest in cyber resilience will be better able to sustain operations and performance – a definite competitive advantage over those caught unprepared by an attack.

Security facilitates business productivity

One survey of C-level executives revealed that 69% of those surveyed said digitization is ‘very important’ to their company’s current growth strategy. 64% also recognized that cybersecurity is a ‘significant’ driver of the success of digital products, services, and business models.

Security develops customer loyalty

PricewaterhouseCoopers’ 21st Global CEO Survey found that 87% of global CEOs say they are investing in cybersecurity to build trust with customers.

I recognize the need to strengthen security defense mechanisms for the sake of risk mitigation. However, if we restrict ourselves to the traditional “security as insurance policy” mindset, we miss the greater value of good information security in supporting business success.

Justifying investment in security technology is tough. Because it is difficult to measure ROI for security controls, many companies justify security investments in terms of risk reduction.

Recognizing this difficulty, Slavik Markovic, CEO of Demisto, proposes “10 best practices for bolstering security and increasing ROI.” He introduces the topic, in part, with this statement:

CISOs are being asked to provide proof that the money spent [on security] — or that they are asking to be spent — will lead to greater effectiveness, more efficient operations or better results.

Rather than proposing a specific ROI calculation method, Markovic recommends taking a more holistic approach to the problem. His recommended 10 best practices are:

I recommend that you read the commentary supporting each best practice.

Two of these recommendations entail leveraging technology to expand the expertise and capabilities of security professionals, rather than relying on just hiring more expensive staff:

3. Automate and orchestrate. Strive for security orchestration and process automation. The current threat landscape is vast, complex and constantly changing. Even a well-staffed security operations center cannot keep pace with the volume of alerts, especially with the ever-increasing number of duplicates and false positives. Use automation for threat hunting, investigations and other repetitive tasks that consume too much of analysts’ time.

6. Leverage analytics. Adopt advanced analytics. Machine learning and artificial intelligence are delivering truly innovative solutions. CISOs should research these two fields carefully to determine which analytics tools best fit their agencies, taking into account the organization’s strengths and weaknesses related to skills, personnel and risks.

I like the way Markovic looks broadly at how to justify security investment. Security, after all, touches almost every aspect of modern business, and is strategically vital to business success.

This morning, I finally downloaded and read the new “Oracle and KPMG – Cloud Threat Report 2018.” I have known this was coming for a few months, and was delighted by how it turned out. The report contains a wealth of timely, insightful information for those who need to know how to not only cope, but excel, in the rapidly changing information systems infrastructures of modern business.

Mary Ann Davidson, CSO, Oracle Corporation, stated in the report’s Foreword:

In the age of social media, it is popular to speak of what’s “trending.” What we are seeing is not a trend, but a strategic shift: the cloud as an enabler of security.

The dazzling insights in the Oracle and KPMG Cloud Threat Report, 2018 come not from professional pundits, but from troops in the trenches: security professionals and decision makers who have dealt with the security challenges of their own organizations and who are increasingly moving critical applications to the cloud.

A few key research findings are summarized in the following list and illustrated by the numbers in the “Key Research Findings” chart:

KPMG offers this Call to Action:

C-level, finance, HR, risk, IT, and security leaders are responsible for ensuring that the organization has a cybersecurity program to address risks inherent in the cloud.

Beyond making sure that risks are mitigated and compliance requirements are addressed, leaders should accept and assert their responsibility for protecting the business. A critical first step is to understand the “shared responsibility” principles for cloud security and controls. Knowing what security controls the vendor provides allows the business to take steps to secure its own cloud environment.

To further protect an organization, it is crucial that everyone in the organization—not just its leaders—is educated about the cloud’s inherent risks and the policies designed to help guard against those risks. This requires clear communication and training to employees on cloud usage. KPMG and Oracle’s research found that there may be considerable room for improvement in this area, as individuals, departments, and lines of business within organizations are often in violation of cloud service policies.

I have really just skimmed the report. I look forward to digesting the content more completely. Stay tuned for more analysis and commentary from my perspective.

As I read a recent Risk Management Monitor article, “Companies Must Evolve to Keep Up With Hackers,” I couldn’t help but think – at what cost? Perhaps you can calculate the amount a company spends on tools and processes to defend against cyberattacks, and perhaps even justify that expense by attempting to estimate the cost of a data breach were it to occur.

But what about the cost of lost opportunity? Has anyone tried to estimate how much time, attention and resources are diverted from managing and innovating in the core business to defend against cyberattacks? I would guess that such diversion robs more from the overall business than the more visible expenses that show up on a balance sheet – which are growing at an alarming rate.

So, Mr. or Ms. Hacker, whoever you are, you are robbing our society blind – in ways that are really tough to measure. Man up and do something productive for a change!

P.S., Jerry Dixon, author of the article, is not related to me that I know of, but he writes a good article!

May 25, 2018 is bearing down on us like a proverbial freight train. That is the date when the European Union General Data Protection Regulation (GDPR) becomes binding law on all companies that store or use personal information related to EU citizens. (Check out the countdown clock on the GDPR website.)

Last week, Oracle published a new white paper, “Helping Address GDPR Compliance Using Oracle Security Solutions.”

Leveraging our experience built over the years and our technological capabilities, Oracle is committed to help customers implement a strategy designed to address GDPR security compliance. This whitepaper explains how Oracle Security solutions can be used to help implement a security framework that addresses GDPR.

GDPR is primarily focused on protecting fundamental privacy rights for individuals. By necessity, protection of personal information requires good data security. As stated in the white paper:

The protection of the individuals whose personal data is being collected and processed is a fundamental right that necessarily incorporates IT security.

In modern society, IT systems are ubiquitous and GDPR requirements call for good IT security. In particular, to protect and secure personal data it is, among other things, necessary to:

- Know where the data resides (data inventory)

- Understand risk exposure (risk awareness)

- Review and, where necessary, modify existing applications (application modification)

- Integrate security into IT architecture (architecture integration)

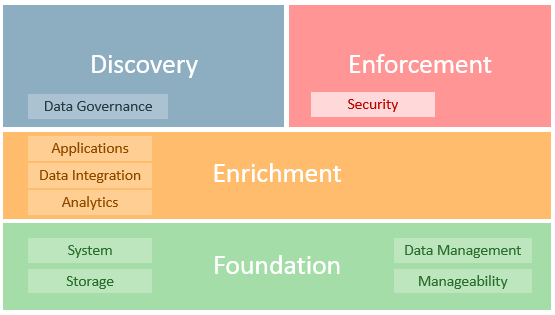

Oracle proposes the following framework to

… help address GDPR requirements that impact data inventory, risk awareness, application modification, and architecture integration. The following diagram provides a high-level representation of Oracle’s security solutions framework, which includes a wide range of products and cloud services.

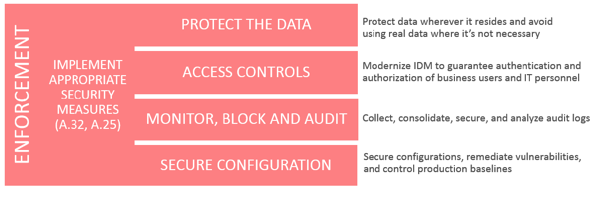

The paper primarily focuses on the “Enforcement” portion of this model, proposing that:

… four security requirements are a part of many global regulatory requirements and well-known security best practices (i.e. ISO 27000 family of standards, NIST 800-53, PCI-DSS 3.2, OWASP and CIS Controls).

In conclusion, the paper states:

The path towards GDPR compliance includes a coordinated strategy involving different organizational entities including legal, human resources, marketing, security, IT and others. Organizations should therefore have a clear strategy and action plan to address the GDPR requirements with an eye towards the 25 May, 2018 deadline.

Based on our experience and technological capabilities, Oracle is committed to help customers with a strategy designed to achieve GDPR security compliance.

May 25, 2018 is less than ten short months away. We all have a lot of work to do.

Eight years ago this month, I posted a short article on this blog entitled, Passwords and Buggy Whips.

Quoting Dave Kearns, the self-proclaimed Grandfather of Identity Management:

Username/password as sole authentication method needs to go away, and go away now. Especially for the enterprise but, really, for everyone. As more and more of our personal data, private data, and economically valuable data moves out into “the cloud” it becomes absolutely necessary to provide stronger methods of identification. The sooner, the better.

I commented:

Perhaps this won’t get solved until I can hold my finger on a sensor that reads my DNA signature with 100% accuracy and requires that my finger still be alive and attached to my body.  We’ll see …

So here we are. Eight years have come and gone, and we still use buggy whips (aka passwords) as the primary method of online authentication.

Interesting standards like FIDO have been proposed, but are still not widely used.

I was a beta tester for UnifyID’s solution, which used my phone and my online behavior as multiple factors. I really liked their solution until my employer stopped supporting the Google Chrome browser in favor of Firefox. Alas, UnifyID doesn’t support Firefox!

We continue to live in a world that urgently needs to be as rid of passwords as we are of buggy whips, but I don’t see a good solution coming any time soon.  Maybe in another eight years?

This morning, I watched the launch webcast for the Oracle Identity Cloud Service, a cloud-native security and identity management platform designed to be an integral part of the enterprise security fabric.

This short video, shown on the webcast, provides a brief introduction:
